ExCyTIn-Bench is an open-source Microsoft benchmark that evaluates AI agents by simulating realistic, multistage cyber threat investigations within an Azure SOC using 57 Sentinel log tables and incident graphs. It measures step-by-step investigative reasoning and tool use to help organizations select and improve AI-powered security features. #ExCyTIn-Bench #MicrosoftSentinel

Key Points

- ExCyTIn-Bench simulates real-world SOC investigations in Azure using 57 log tables from Microsoft Sentinel and related services to reflect scale, noise, and complexity.

- The benchmark uses bipartite alert-entity incident graphs as ground truth to generate explainable question-answer pairs and evaluate investigative strategy quality.

- It provides fine-grained, step-by-step reward signals for each investigative action rather than simple binary success/failure metrics.

- Microsoft uses ExCyTIn-Bench internally to evaluate and improve AI features across Microsoft Security Copilot, Microsoft Sentinel, and Microsoft Defender.

- ExCyTIn-Bench is open source, so researchers and vendors can benchmark, compare, and improve models collaboratively; personalized, tenant-specific benchmarks are planned.

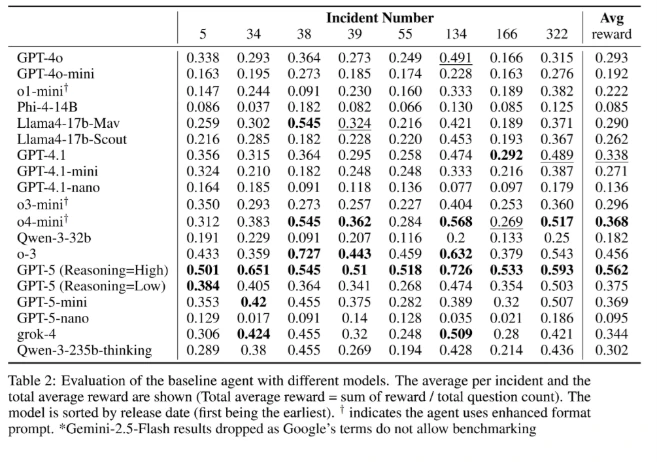

- Recent results show that advanced models (e.g., GPT-5 with high reasoning effort) and smaller models with chain-of-thought (CoT) prompting enabled (e.g., GPT-5-mini) achieve higher rewards, which suggests that explicit multistep reasoning is essential for this kind of investigation.

- Open-source models are narrowing the performance gap with proprietary models, increasing accessibility of high-quality security automation.
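The alert-entity graph and the step-by-step reward described in the key points can be illustrated with a minimal Python sketch. Everything in it is an assumption made for illustration: the alert and entity names, the helpers `build_graph`, `shortest_path`, `make_question`, and `step_reward`, and the `decay` value are hypothetical and are not taken from the ExCyTIn-Bench code. The idea shown is that a path through the bipartite graph yields a question (start alert), an answer (final entity), and intermediate steps that can earn partial credit.

```python
from collections import deque

# Hypothetical incident: each edge links an alert to an entity it mentions.
EDGES = [
    ("alert:phishing_email", "entity:user_alice"),
    ("alert:phishing_email", "entity:url_login_page"),
    ("alert:suspicious_signin", "entity:user_alice"),
    ("alert:suspicious_signin", "entity:ip_203.0.113.7"),
    ("alert:malware_execution", "entity:ip_203.0.113.7"),
    ("alert:malware_execution", "entity:host_ws01"),
]


def build_graph(edges):
    """Build an undirected adjacency map; alerts only ever touch entities."""
    graph = {}
    for alert, entity in edges:
        graph.setdefault(alert, set()).add(entity)
        graph.setdefault(entity, set()).add(alert)
    return graph


def shortest_path(graph, start, goal):
    """Breadth-first search; returns the node list from start to goal, or None."""
    queue = deque([[start]])
    seen = {start}
    while queue:
        path = queue.popleft()
        if path[-1] == goal:
            return path
        for nxt in sorted(graph[path[-1]]):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(path + [nxt])
    return None


def make_question(path):
    """Start alert is the context, final entity the answer, the rest are steps."""
    return {
        "context": path[0],
        "answer": path[-1],
        "solution_steps": path[1:-1],
    }


def step_reward(qa, found, decay=0.4):
    """Full credit for the answer; otherwise decayed partial credit per step.

    Steps closer to the answer weigh more. The decay value is an assumption.
    """
    if qa["answer"] in found:
        return 1.0
    reward, weight = 0.0, decay
    for step in reversed(qa["solution_steps"]):
        if step in found:
            reward += weight
        weight *= decay
    return min(reward, 1.0)


if __name__ == "__main__":
    graph = build_graph(EDGES)
    path = shortest_path(graph, "alert:phishing_email", "entity:host_ws01")
    qa = make_question(path)
    print(qa)
    print(step_reward(qa, {"entity:user_alice", "alert:suspicious_signin"}))
```

Because the reward is computed per step, an agent that reaches only the first pivots of the investigation still receives a small, nonzero score instead of a flat failure.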

MITRE Techniques

- [T1592] Gather Victim Host Information – Used as part of multistep investigations where agents query live log tables to collect host-related evidence (‘agent queries live log tables, transitions across data sources, and plans multistep investigations’).

- [T1596] Search Open Technical Databases – Agents construct advanced queries across multiple log tables and Sentinel data to find indicators and context (‘AI agents are challenged to analyze noisy, multitable security data, construct advanced queries, and uncover indicators of compromise (IoCs)’).

- [T1610] Deploy Container – Simulated SOC environment in Azure hosts agent experiments and tool integrations for realistic testing (‘positioning the agent within a controlled Azure SOC environment: where the agent queries live log tables’).

- [T1039] Data from Network Shared Drive – Investigation graphs link alerts and entities across data sources, reflecting evidence synthesis across logs and storage (‘Incident or Threat Investigation graphs portray multi-stage attacks by linking alerts, events, and indicators of compromise (IoCs) into a unified view’).

- [T1106] Native API (formerly Execution through API) – Agents interact programmatically with Azure/Sentinel log tables and security product APIs during investigations (‘the agent queries live log tables, transitions across data sources, and plans multistep investigations’).
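The quoted behaviour, an agent that queries log tables and transitions across data sources, can be sketched as a two-step pivot. This is a rough illustration only: Microsoft Sentinel is normally queried with KQL, while the sketch uses an in-memory SQLite database as a stand-in. The table names borrow from Sentinel (`SigninLogs`, `DeviceProcessEvents`), but the simplified columns, the sample rows, and the helpers `make_demo_db` and `investigate` are hypothetical.

```python
import sqlite3


def make_demo_db():
    """Create an in-memory database with two toy log tables (assumed schema)."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE SigninLogs (ts TEXT, account TEXT, ip TEXT, result TEXT);
        CREATE TABLE DeviceProcessEvents (ts TEXT, host TEXT, account TEXT, process TEXT);
        INSERT INTO SigninLogs VALUES
            ('2024-01-01T09:00', 'alice', '198.51.100.4', 'success'),
            ('2024-01-01T09:05', 'alice', '203.0.113.7', 'success'),
            ('2024-01-01T09:06', 'bob', '198.51.100.9', 'failure');
        INSERT INTO DeviceProcessEvents VALUES
            ('2024-01-01T09:10', 'ws01', 'alice', 'powershell.exe'),
            ('2024-01-01T09:12', 'ws02', 'bob', 'notepad.exe');
        """
    )
    return conn


def investigate(conn, suspicious_ip):
    """Pivot from an IP to accounts, then from accounts to host activity."""
    steps = []

    # Step 1: which accounts signed in successfully from the suspicious IP?
    accounts = [
        row[0]
        for row in conn.execute(
            "SELECT DISTINCT account FROM SigninLogs "
            "WHERE ip = ? AND result = 'success'",
            (suspicious_ip,),
        )
    ]
    steps.append(("SigninLogs", accounts))

    # Step 2: move to a second table and look up what those accounts ran.
    activity = []
    for account in accounts:
        activity.extend(
            conn.execute(
                "SELECT host, process FROM DeviceProcessEvents "
                "WHERE account = ? ORDER BY ts",
                (account,),
            ).fetchall()
        )
    steps.append(("DeviceProcessEvents", activity))
    return steps


if __name__ == "__main__":
    for table, evidence in investigate(make_demo_db(), "203.0.113.7"):
        print(table, evidence)
```

Recording each step with the table it came from keeps the trail of pivots explicit, which is the kind of intermediate evidence a step-by-step evaluation can score.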

Indicators of Compromise

- [Log Tables] Source data for investigations – the 57 Microsoft Sentinel log tables referenced, plus related Azure service logs.

- [Investigation Artifacts] IoCs derived from simulated scenarios – alert-entity links in incident graphs and the indicators uncovered during investigations (described only in general terms; no specific hashes or domains are provided).