Local models preserve data privacy but introduce supply-chain risks: downloaded model files, which are often pickled, can execute arbitrary code when loaded, and fine-tuned weights can hide sleeper agents that trigger on specific prompts. The mitigations are simple and effective: download from verified providers on Hugging Face, prefer the SafeTensors format, and verify model hashes. Together these eliminate the vast majority of threats. #Pickle #SafeTensors #HuggingFace #DeepSeekR1 #PyTorch

Key points

- Local models keep data off remote servers but do not prevent malicious code from entering your machine.

- Python’s pickle format can embed instructions that import and call arbitrary code, which runs as soon as a model file is deserialized.

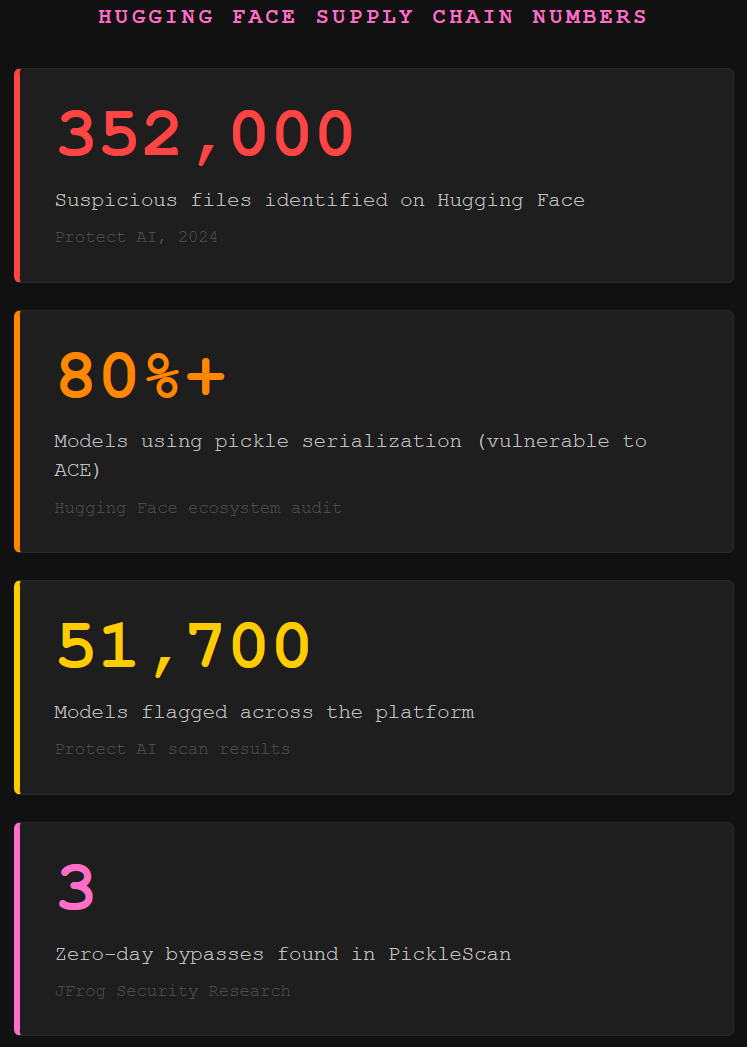

- Supply-chain scans miss many malicious models. Protect AI found hundreds of thousands of suspicious files, and many models use pickle by default.

- Fine-tuning can embed sleeper agents that activate on specific triggers, producing insecure or backdoored outputs.

- Use verified providers, download only SafeTensors files, and verify hashes to remove roughly 90% of the attack surface.
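
Two of the points above can be illustrated with a minimal Python sketch using only the standard library. It is not taken from the original article. The names `risky_opcodes`, `HarmlessPayload`, `sha256_of_file`, and `matches_published_hash` are hypothetical, and the sketch assumes the provider publishes a SHA-256 hex digest for the file. The first part lists pickle opcodes that can import or call objects without ever unpickling the data; the second compares a downloaded file against the published hash.

```python
import hashlib
import pickle
import pickletools

# Opcodes that let a pickle import, instantiate, or call objects while loading.
RISKY_OPCODES = {
    "GLOBAL", "STACK_GLOBAL", "REDUCE", "INST",
    "OBJ", "NEWOBJ", "NEWOBJ_EX", "BUILD",
}


def risky_opcodes(data: bytes) -> set:
    """Return the risky opcode names present in `data` without unpickling it."""
    found = {opcode.name for opcode, _arg, _pos in pickletools.genops(data)}
    return found & RISKY_OPCODES


class HarmlessPayload:
    """Stand-in for a malicious object: unpickling it calls print()."""

    def __reduce__(self):
        # A real attack would return something like os.system here.
        return (print, ("code ran during unpickling",))


def sha256_of_file(path: str, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large model files need not fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_published_hash(path: str, expected_hex: str) -> bool:
    """Compare the file's SHA-256 with the digest published by the provider."""
    return sha256_of_file(path) == expected_hex.strip().lower()
```

Calling `risky_opcodes(pickle.dumps(HarmlessPayload()))` reports the call opcode without running the payload, while plain data such as a dict of numbers yields an empty set. Legitimate PyTorch pickle checkpoints also contain these opcodes, so a hit is a reason to inspect the file rather than proof of malice; dedicated scanners go further and check which names are imported.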

Read More: https://www.toxsec.com/p/is-your-local-ai-model-backdoored