This post evaluates using large language models to extract and contextualize information from CTI reports, turning narrative into structured JSON entities and a unified knowledge graph for downstream defense workflows. It describes a three‑phase workflow (sanitization, LLM extraction, knowledge‑graph assembly), experimental results across multiple LLMs, and operational trade‑offs in accuracy, abstention, ensembling, and data‑model design. #GPT4_1 #ClaudeSonnet4_5
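
The three phases named above can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not the post's implementation: `sanitize`, `extract_stub`, and `assemble_graph` are hypothetical names, a regular expression stands in for the LLM extractor call, and the actor, playbook, and step names are placeholders.

```python
import re
from collections import defaultdict


def sanitize(raw_report: str) -> str:
    """Phase 1 (assumed behavior): strip HTML tags and collapse whitespace."""
    text = re.sub(r"<[^>]+>", " ", raw_report)
    return re.sub(r"\s+", " ", text).strip()


def extract_stub(text: str) -> dict:
    """Phase 2 placeholder: a regex stands in for the LLM extractor call."""
    # Matches defanged domains such as attacker-domain[.]net.
    domains = re.findall(r"\b[\w-]+\[\.\]\w+\b", text)
    return {
        "threat_actor": "ExampleActor",      # placeholder value
        "playbook": "ExamplePlaybook",       # placeholder value
        "steps": [{"name": "command-and-control", "iocs": domains}],
    }


def assemble_graph(entities: dict) -> dict:
    """Phase 3: link actor -> playbook -> step -> IOC as adjacency lists."""
    graph = defaultdict(list)
    graph[entities["threat_actor"]].append(entities["playbook"])
    for step in entities["steps"]:
        graph[entities["playbook"]].append(step["name"])
        graph[step["name"]].extend(step["iocs"])
    return dict(graph)


report = "<p>The implant beacons to attacker-domain[.]net over HTTPS.</p>"
graph = assemble_graph(extract_stub(sanitize(report)))
print(graph)
```

In a real pipeline the stub would be replaced by one structured-output call per extractor, and the adjacency lists by whatever graph store the downstream workflow uses.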

Keypoints

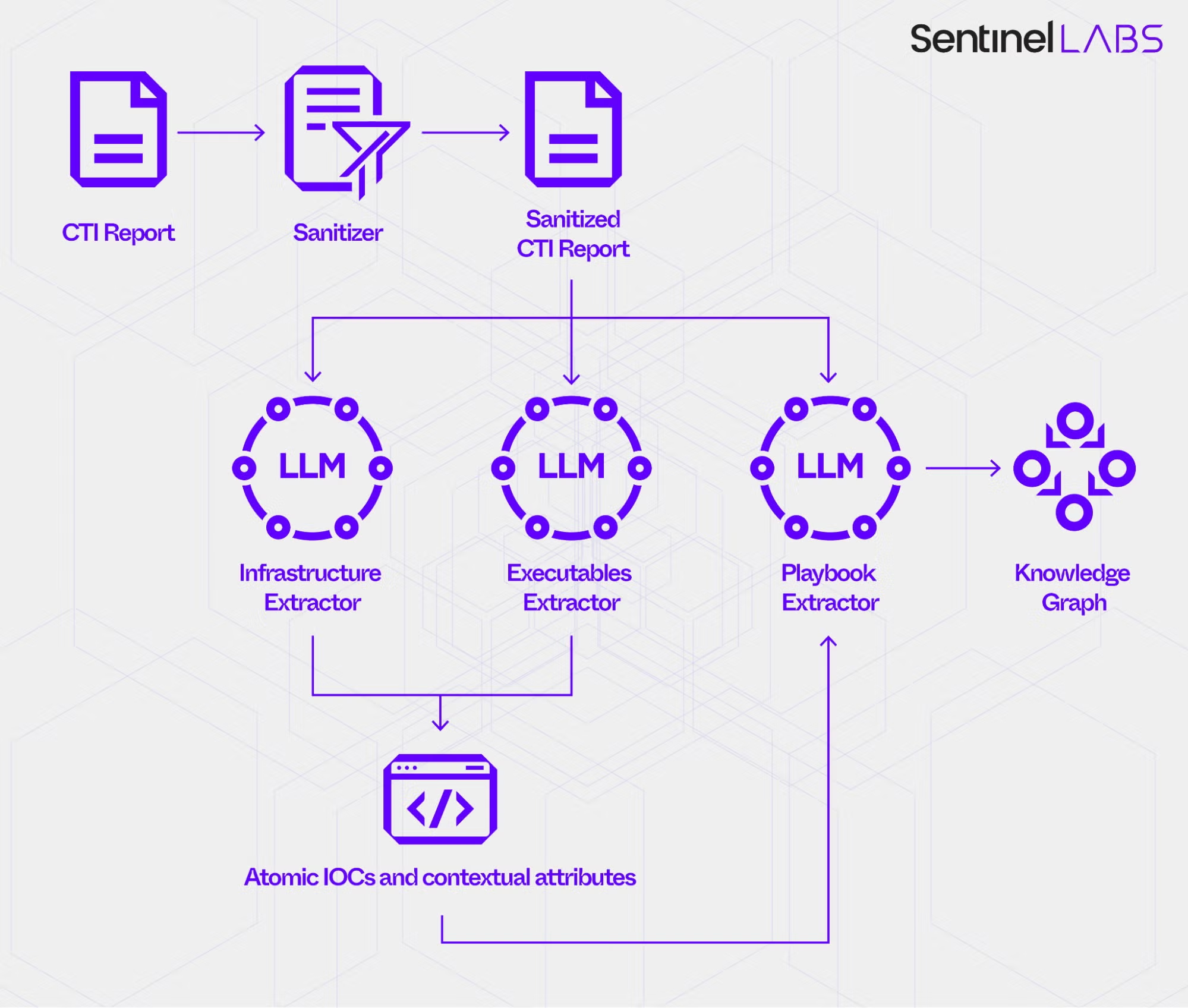

- Proposes a three‑phase CTI extraction pipeline: report ingestion and sanitization, LLM‑based extractors (Infrastructure, Executables, Playbook), and knowledge‑graph assembly linking IOCs, steps, playbooks, and threat actors.

- Uses custom extractor data models (including 12 IOC contextual attributes) and structured prompts with an evidence‑grading policy (High/Medium/Low) and an explicit abstention class (None) to constrain inference.

- Evaluates off‑the‑shelf LLMs (GPT‑4.1, GPT‑5, GPT‑5.2, Claude Sonnet 4.5, Claude Opus 4.5) on a ground truth of 343 atomic IOCs and 1,859 labeled IOC attribute instances, emphasizing feasibility rather than definitive model ranking.

- LLM extractors delivered large speedups versus human analysts (human baseline ~41 minutes per report; LLMs ~3.3 minutes on average, >18× speed‑up) while trading off coverage and correctness depending on settings.

- Extraction quality depends strongly on report formatting, label/context cues, OCR coverage for embedded images, prompt‑model fit, and deliberate data‑model wording that guides LLM attention.

- Discusses ensemble strategies, abstention error metrics (FDR, FNR), and the need for representative, flexible ground truth that can capture genuine ambiguity via multiple valid labels.

- Recommends deliberate operational planning, continuous evaluation, and tailored data‑model and prompt design to balance accuracy, coverage, latency, and downstream reliability.
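
To make the evidence grades, the abstention class, and the abstention error metrics more concrete, here is a small sketch. It rests on assumptions the summary does not confirm: the attribute names are hypothetical, and FDR and FNR use the standard definitions FP / (FP + TP) and FN / (FN + TP), where "positive" means the model supplied a value instead of abstaining. The post may define these differently.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Evidence(Enum):
    HIGH = "High"
    MEDIUM = "Medium"
    LOW = "Low"
    NONE = "None"  # explicit abstention: the report does not support a value


@dataclass(frozen=True)
class AttributeLabel:
    ioc: str
    attribute: str            # hypothetical attribute name
    value: Optional[str]
    evidence: Evidence

    def effective_value(self) -> Optional[str]:
        """Treat an abstention as 'no value', whatever the value field holds."""
        return None if self.evidence is Evidence.NONE else self.value


def abstention_errors(predicted, truth):
    """Return (FDR, FNR) under the assumed definitions described above."""
    pred_map = {(p.ioc, p.attribute): p.effective_value() for p in predicted}
    tp = fp = fn = 0
    for key, gold in truth.items():
        pred = pred_map.get(key)
        if pred is None:
            if gold is not None:
                fn += 1       # abstained although a value was available
        elif pred == gold:
            tp += 1           # supplied the correct value
        else:
            fp += 1           # supplied a wrong or unsupported value
    fdr = fp / (fp + tp) if fp + tp else 0.0
    fnr = fn / (fn + tp) if fn + tp else 0.0
    return fdr, fnr


truth = {
    ("mal.exe", "command_line"): "mal.exe -s -c",
    ("mal.exe", "injected_process"): "explorer.exe",
    ("mal.exe", "file_path"): None,   # the report gives no path
    ("192.0.2.1", "role"): "c2",
}
predicted = [
    AttributeLabel("mal.exe", "command_line", "mal.exe -s -c", Evidence.HIGH),
    AttributeLabel("mal.exe", "injected_process", None, Evidence.NONE),
    AttributeLabel("mal.exe", "file_path", "/usr/bin/evil", Evidence.LOW),
    AttributeLabel("192.0.2.1", "role", "c2", Evidence.MEDIUM),
]
fdr, fnr = abstention_errors(predicted, truth)
print(f"FDR={fdr:.2f} FNR={fnr:.2f}")
```

Reporting the two rates separately makes the trade-off visible: a model that abstains more often tends to lower FDR at the cost of a higher FNR, and vice versa.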

MITRE Techniques

Indicators of Compromise

- [Domain ] selective extraction of attacker‑owned versus benign domains – malicious-example[.]com, attacker-domain[.]net

- [IP address ] infrastructure indicators described in reports and IOC tables – 192.0.2.1, 198.51.100.23

- [File hash ] executable identifiers (MD5, SHA‑1, SHA‑256) for malicious or attacker‑used binaries – d41d8cd98f00b204e9800998ecf8427e (MD5), e3b0c44298fc1c149afbf4c8996fb92427ae41e4… (SHA‑256), and 2 more hashes

- [File path ] extracted open‑text attributes for reported artifacts on host systems – C:\Windows\Temp\mal.exe, /usr/bin/evil

- [Command line ] command‑line arguments associated with executables – mal.exe -s -c, python exploit.py --target 10.0.0.1

- [Injected process ] names of processes reported as injection targets – explorer.exe, svchost.exe

- [Certificate fingerprint ] pivotable artifacts used for correlation and blocking decisions – SHA1:AB:CD:EF:12:34:56:78:90:AB:CD:EF:12:34:56:78:90, cert-fingerprint:12:34:56:78:90:AB
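
Indicators like those listed above usually need light normalization before they can be matched or loaded into a graph. The sketch below is illustrative only: `refang` and `hash_type` are hypothetical helper names, and the hash type is inferred purely from hex length (32, 40, and 64 characters for MD5, SHA‑1, and SHA‑256). A truncated value is deliberately left unclassified instead of being guessed.

```python
import re
from typing import Optional

# Hex digest lengths for the hash families named in the list above.
HASH_LENGTHS = {32: "MD5", 40: "SHA-1", 64: "SHA-256"}


def refang(indicator: str) -> str:
    """Undo the common '[.]' defanging so the value can be matched or queried."""
    return indicator.replace("[.]", ".")


def hash_type(candidate: str) -> Optional[str]:
    """Classify a hex digest by length; return None for anything else."""
    value = candidate.strip().lower()
    if re.fullmatch(r"[0-9a-f]+", value):
        return HASH_LENGTHS.get(len(value))
    return None  # non-hex characters, including a truncation ellipsis


print(refang("malicious-example[.]com"))
print(hash_type("d41d8cd98f00b204e9800998ecf8427e"))
print(hash_type("e3b0c44298fc1c149afbf4c8996fb92427ae41e4…"))
```

Refanged values should stay inside the analysis pipeline; anything written back into a report or ticket is normally defanged again.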