The article describes a serial, multi-agent pipeline that treats each reverse-engineering tool (radare2, Ghidra, Binary Ninja, IDA Pro) as an independent, skeptical analyst to cross-validate findings and reject decompiler artifacts and parsing errors before report synthesis. It also explains why deterministic bridge scripts were chosen over the Model Context Protocol to reduce latency, non-determinism, and token costs, and documents token economics, tiered model allocation, and operational lessons from early runs. #WizardUpdate #SysJoker

Keypoints

- Single-tool LLM analysis is unreliable because decompiler artifacts, dead code, and parsing quirks in outputs (e.g., mangled strings, compiler stubs) propagate into confident but incorrect reports.

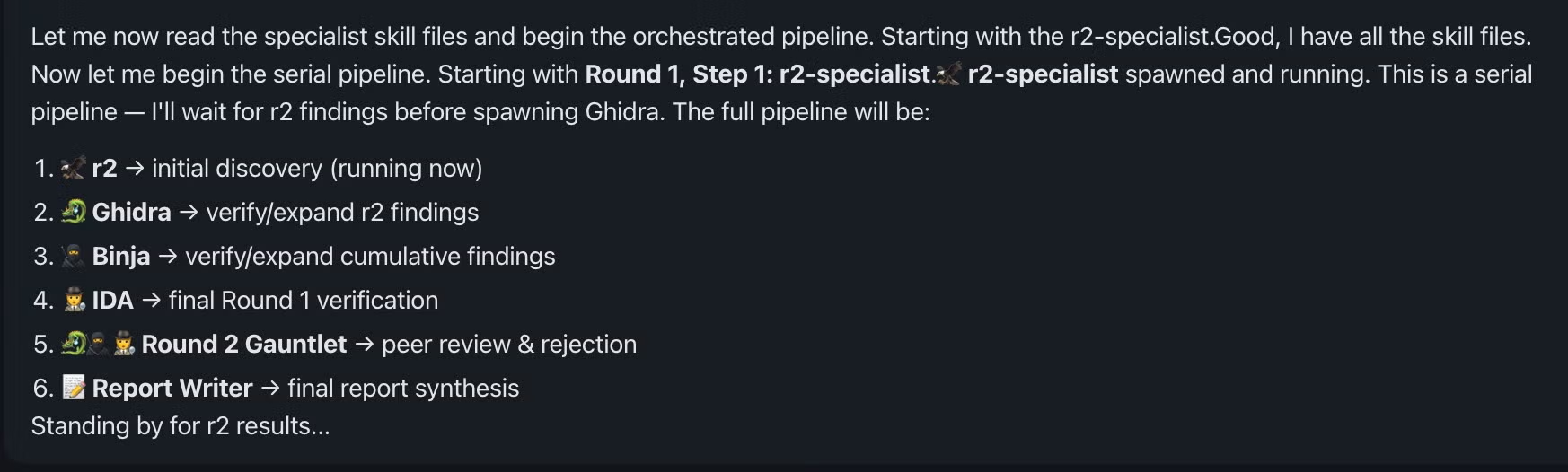

- The system uses a serial consensus pipeline on OpenClaw with an Orchestrator and four tool-specific subagents (radare2 → Ghidra → Binary Ninja → IDA Pro) that pass an in-memory Shared Context between rounds.

- The pipeline runs in three phases: initial extraction, an adversarial “Gauntlet” peer-review round where agents must AGREE or DISAGREE, and a final report-writing stage anchoring claims to virtual addresses and decompilation snippets.

- An Active Rejection Mandate requires subagents to explicitly reject incorrect claims and record rationale, preventing artifacts (e.g., mangled C2 strings, decompiler hallucinations) from reaching the final report.

- Deterministic bridge scripts that dump comprehensive tool outputs to disk were chosen over MCP to ensure deterministic extraction, lower latency, predictable token usage, and better prompt-caching economics.

- Tiered reasoning assigns stronger models to the Orchestrator and report-writer and cheaper models to subagents, balancing cost and reasoning capability while handling rate-limit and concurrency constraints.

- Operational lessons included normalizing subagent output schemas, adding exit-code propagation to bridge scripts, capping concurrency to avoid rate limits, and preserving state in the Orchestrator to recover from dropped subagents.
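
The Gauntlet round and the Active Rejection Mandate described in the keypoints can be sketched roughly as follows. This is a minimal illustration, not the article's implementation: the `Claim`, `Review`, and `resolve` names, the strict-majority acceptance rule, the reviewer labels, and the virtual address `0x401000` are all assumptions made for the example. Only the AGREE/DISAGREE verdicts, the requirement to record a rationale, and the two C2 path strings come from the summary.

```python
from dataclasses import dataclass, field
from enum import Enum


class Verdict(Enum):
    AGREE = "AGREE"
    DISAGREE = "DISAGREE"


@dataclass
class Review:
    reviewer: str    # tool-specific subagent (labels here are hypothetical)
    verdict: Verdict
    rationale: str   # must be non-empty for a DISAGREE


@dataclass
class Claim:
    text: str
    address: str     # virtual address anchoring the claim (hypothetical value below)
    proposed_by: str
    reviews: list[Review] = field(default_factory=list)


def resolve(claim: Claim) -> tuple[bool, list[str]]:
    """Return (accepted, rejection rationales) for one claim.

    Assumed rule: a strict majority of reviewers must AGREE, and every
    DISAGREE must carry a rationale so the rejection is on record.
    """
    for review in claim.reviews:
        if review.verdict is Verdict.DISAGREE and not review.rationale.strip():
            raise ValueError(f"{review.reviewer} rejected a claim without a rationale")
    agrees = sum(r.verdict is Verdict.AGREE for r in claim.reviews)
    rationales = [
        f"{r.reviewer}: {r.rationale}"
        for r in claim.reviews
        if r.verdict is Verdict.DISAGREE
    ]
    # An unreviewed claim has zero AGREE votes and is therefore not accepted.
    return agrees * 2 > len(claim.reviews), rationales


# The mangled C2 path proposed by one tool is challenged by the other three.
mangled = Claim("C2 path is '/api/req_res'", "0x401000", "radare2")
for tool in ("ghidra", "binja", "ida"):
    mangled.reviews.append(
        Review(tool, Verdict.DISAGREE, "string at this address reads '/api/req/res'")
    )

accepted, rationales = resolve(mangled)
print(accepted, rationales)
```

Raising on a rationale-free rejection is one way to make the mandate enforceable in code rather than only in the prompt; a production pipeline might instead re-prompt the subagent.
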

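The bridge-script approach, including the exit-code propagation mentioned among the operational lessons, might look something like the sketch below. The `run_bridge` function, the `.err` sidecar file, and the output layout are hypothetical; the summary only states that the scripts dump tool output to disk and that exit codes were later propagated. The tool command line is passed in by the caller, so no specific flags for radare2, Ghidra, Binary Ninja, or IDA Pro are assumed here.

```python
import subprocess
import sys
from pathlib import Path


def run_bridge(cmd: list[str], out_path: Path) -> int:
    """Run one tool command, dump its stdout to disk, and return its exit code.

    Returning the child's exit code unchanged lets the caller tell a failed
    extraction apart from an empty but successful one.
    """
    out_path.parent.mkdir(parents=True, exist_ok=True)
    with out_path.open("wb") as out:
        proc = subprocess.run(cmd, stdout=out, stderr=subprocess.PIPE)
    if proc.returncode != 0:
        # Keep stderr next to the dump so the failure reason is not lost.
        out_path.with_suffix(".err").write_bytes(proc.stderr)
    return proc.returncode


def main(argv: list[str]) -> int:
    """Entry point: OUTPUT_FILE COMMAND [ARGS...]."""
    if len(argv) < 3:
        print("usage: bridge.py OUTPUT_FILE COMMAND [ARGS...]", file=sys.stderr)
        return 2
    return run_bridge(argv[2:], Path(argv[1]))
```

A script built this way would finish with `sys.exit(main(sys.argv))`, so that a crashed or misconfigured tool surfaces as a non-zero status to whatever invoked it instead of leaving a silently empty dump on disk.
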
MITRE Techniques

- No MITRE ATT&CK techniques explicitly mentioned – The article does not reference specific MITRE technique IDs or names.

Indicators of Compromise

- [File Hashes] sample hashes tied to named samples – 60c8128c48aac890a6d01448d1829a6edcdce0d2 (WizardUpdate), 78aa572faa73f6873d24f24e423d315e7eb2c2d (Go Infostealer), and 2 more hashes.

- [Strings] C2 and string artifacts observed in analysis – ‘/api/req_res’ (radare2-mangled), ‘/api/req/res’ (correct extraction), and misclassified Go runtime strings initially mistaken for a Tor .onion address.
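
As an illustration of how a claim like the mistaken .onion address could be challenged cheaply, the sketch below applies a purely syntactic check to a candidate hostname. The summary does not say how the misclassification was actually caught, so this is an assumption, and `looks_like_onion` is a hypothetical helper. It relies only on the address format: 56 base32 characters for v3 onion services, 16 for the legacy v2 format. It does not verify the v3 checksum, so a pass means "plausible", not "confirmed".

```python
import re

# Base32 alphabet is a-z and 2-7; v3 addresses are 56 characters long,
# legacy v2 addresses were 16.
_ONION_RE = re.compile(r"(?:[a-z2-7]{16}|[a-z2-7]{56})\.onion")


def looks_like_onion(candidate: str) -> bool:
    """Return True if the bare hostname is syntactically a .onion address."""
    host = candidate.strip().lower()
    return _ONION_RE.fullmatch(host) is not None
```

A string that merely contains `.onion` but fails this check is a natural candidate for a DISAGREE verdict with the failed check recorded as the rationale.
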