The article outlines security risks and operational best practices for running AI and ML workloads on Kubernetes and Oracle Cloud Infrastructure (OCI). It emphasizes the shared responsibility model and the need to secure data planes, GPU nodes, inference services, and supply chains. It also reviews recent AI-targeted incidents and advocates runtime protection, CI/CD hygiene, and integrated solutions such as Sysdig Secure with Oracle Kubernetes Engine (OKE) for real-time detection and response. #ShadowRay2_0 #OCI

Key points

- AI/ML workloads are standard software workloads running on compute, storage, and networking platforms and are commonly deployed on Kubernetes and cloud infrastructure such as OCI and OKE.

- OCI’s shared responsibility model: Oracle manages the Kubernetes control plane while customers are responsible for data-plane operations, worker nodes, workloads, networking, and application security.

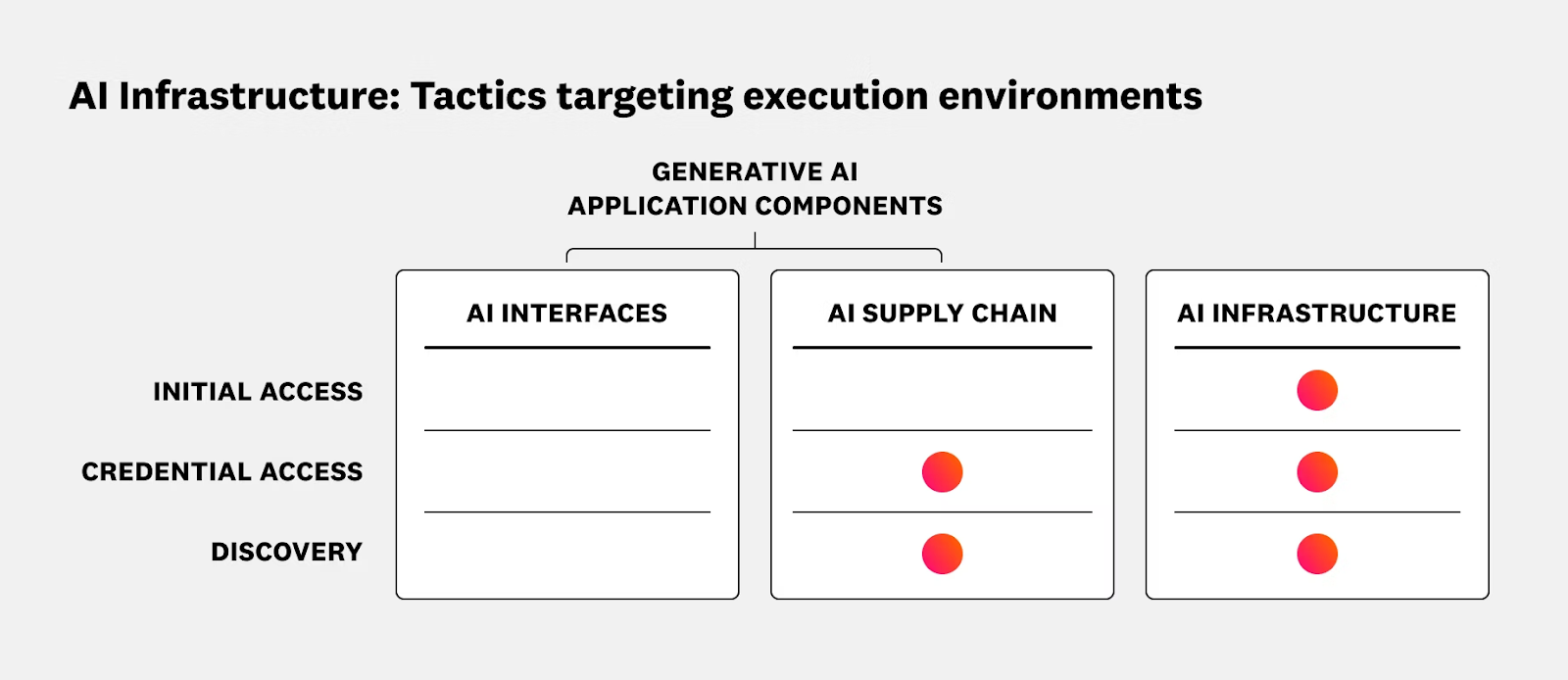

- The AI attack surface spans hardware (CPU/GPU), virtualization, models, data, inference engines, agents, applications, and APIs, with vector stores, inference servers, and over-privileged GPU runtimes as high-risk components.

- Notable recent incidents include the LangFlow Server RCE, an NVIDIA container escape, the ShadowRay 2.0 inference server exploit, a Keras supply-chain vulnerability, and the IBM Bob agent compromise. Together they show how varied supply-chain and runtime exploitation methods have become.

- Effective defenses require early hardening (CI/CD checks, KSPM, least-privilege IAM, trusted images, hardened GPU node pools) plus continuous runtime protection with multi-domain correlation to detect low-and-slow attacks.

- Sysdig’s approach combines runtime insights, agentic AI response, and open innovation, and is integrated with OCI/OKE via blueprints and a Quick Start to bake runtime security and visibility into cluster design.
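The early-hardening checks above (trusted images, least privilege, hardened GPU node pools) are normally enforced by KSPM tools or admission controllers. As a rough illustration only, here is a minimal Python sketch of a CI-stage gate that inspects a Kubernetes PodSpec, given as a plain dict, for common over-privilege patterns. The function name `audit_pod_spec` and the `TRUSTED_REGISTRIES` allow-list are hypothetical and not part of Sysdig, OCI, or OKE; only the PodSpec field names and the `nvidia.com/gpu` resource name come from Kubernetes and the NVIDIA device plugin.

```python
from typing import Any

# Hypothetical allow-list: substitute the registries your pipeline actually trusts.
TRUSTED_REGISTRIES = ("registry.example.com/",)
# Extended resource name advertised by the NVIDIA Kubernetes device plugin.
GPU_RESOURCE = "nvidia.com/gpu"


def audit_pod_spec(spec: dict[str, Any]) -> list[str]:
    """Return human-readable findings for a PodSpec supplied as a dict."""
    findings: list[str] = []

    # Host namespaces weaken isolation between the pod and the worker node.
    for field in ("hostNetwork", "hostPID", "hostIPC"):
        if spec.get(field):
            findings.append(f"pod: {field} is enabled")

    # hostPath volumes expose the node filesystem to the workload.
    for vol in spec.get("volumes", []):
        if "hostPath" in vol:
            findings.append(f"pod: volume {vol.get('name')!r} mounts a hostPath")

    pod_ctx = spec.get("securityContext", {})
    for c in spec.get("containers", []):
        name = c.get("name", "<unnamed>")
        image = c.get("image", "")
        ctx = c.get("securityContext", {})
        limits = c.get("resources", {}).get("limits", {})

        if not image.startswith(TRUSTED_REGISTRIES):
            findings.append(f"{name}: image {image!r} is not from a trusted registry")
        if "@sha256:" not in image:
            findings.append(f"{name}: image is not pinned by digest")
        if ctx.get("privileged"):
            # GPUs requested through the device plugin normally do not need privileged mode.
            hint = " (device-plugin GPU access normally does not need it)" if GPU_RESOURCE in limits else ""
            findings.append(f"{name}: privileged container{hint}")
        # Treat an unset value as permissive, since Kubernetes does not default it to false.
        if ctx.get("allowPrivilegeEscalation", True):
            findings.append(f"{name}: allowPrivilegeEscalation is not set to false")
        # A container-level runAsNonRoot overrides the pod-level value.
        if not ctx.get("runAsNonRoot", pod_ctx.get("runAsNonRoot", False)):
            findings.append(f"{name}: runAsNonRoot is not enforced")
    return findings


if __name__ == "__main__":
    # Example: an over-privileged GPU inference pod pulling an unpinned public image.
    pod = {
        "containers": [{
            "name": "inference",
            "image": "docker.io/library/python:3.12",
            "securityContext": {"privileged": True},
            "resources": {"limits": {GPU_RESOURCE: 1}},
        }],
        "volumes": [{"name": "host-root", "hostPath": {"path": "/"}}],
    }
    for finding in audit_pod_spec(pod):
        print(finding)
```

In a real pipeline a non-empty findings list would fail the build. Static checks like this complement, rather than replace, the continuous runtime detection the article recommends, because they cannot see what a workload does after it starts.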

Read more: https://www.sysdig.com/blog/securing-gpu-accelerated-ai-workloads-in-oracle-kubernetes-engine