AI hallucinations are an unavoidable byproduct of how large language models operate, and recent research aims to predict and reduce these occurrences using mathematical models inspired by physics. While these advancements may not eliminate hallucinations immediately, they could significantly enhance AI reliability and safety in critical applications. #AIHallucinations #MultispinPhysics

Key points

- Hallucinations in large language models are an inherent consequence of their operational design.

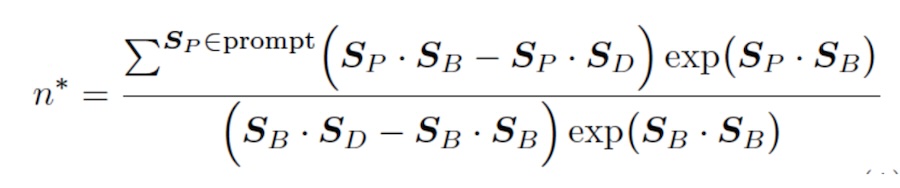

- Researchers are developing mathematical models to predict the point at which AI responses become unreliable, known as the tipping point.

- Johnson’s further work introduces design strategies like ‘gap cooling’ and ‘temperature annealing’ to improve model stability.

- Real-time detection and intervention could help mitigate hallucinations and improve AI trustworthiness.

- While promising, these theoretical approaches require further development before practical implementation in AI systems.