This video explains how vector databases store data as high-dimensional vectors produced by embedding models, which enables semantic similarity search. It also discusses how the individual dimensions of these vectors have no predefined meaning, so a vector is interpreted holistically rather than attribute by attribute. #VectorDatabase #EmbeddingModels

Key points:

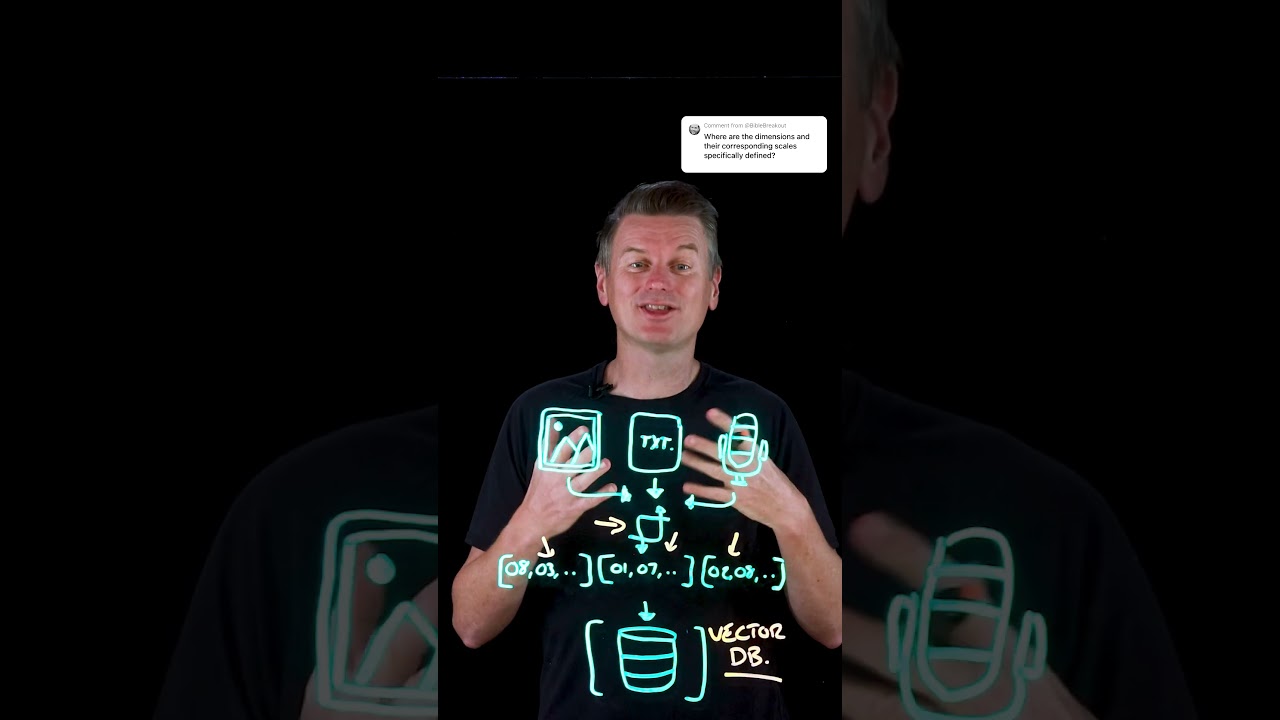

- Vector databases store data as high-dimensional vectors generated by embedding models.

- Raw data such as text or images is converted into numerical vectors that capture semantic meaning.

- The number of dimensions is fixed by the embedding model, but what each dimension represents is not predefined or labeled; it is learned during model training.

- Vector components are abstract numerical features without human-readable labels, although some may loosely correlate with intuitive concepts.

- Similarity searches rely on metrics like cosine similarity or Euclidean distance to find semantically similar content.

- Interpreting individual vector dimensions is generally not meaningful; what matters is the vector's overall position in the vector space.

- Vectors are therefore compared as wholes, by distance or angle, rather than dimension by dimension.

- YouTube Video: https://www.youtube.com/watch?v=aExSNbSC1f8

- YouTube Channel: https://www.youtube.com/channel/UCKWaEZ-_VweaEx1j62do_vQ

- YouTube Published: Mon, 19 May 2025 19:40:34 +0000
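
The two similarity metrics named in the key points can be illustrated with a minimal sketch. Everything here is an assumption made for illustration: the 4-dimensional vectors are invented toy values (real embedding models typically produce hundreds or thousands of dimensions), and `store`, `nearest`, and the item names are hypothetical. The brute-force scan stands in for the indexing a real vector database would use.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def euclidean_distance(a, b):
    """Straight-line distance between two vectors (0.0 means identical)."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

# Hypothetical toy "embeddings"; the values are made up, and no single
# dimension carries a human-readable label.
store = {
    "cat": [0.9, 0.1, 0.0, 0.3],
    "kitten": [0.8, 0.2, 0.1, 0.4],
    "truck": [0.0, 0.9, 0.8, 0.1],
}

def nearest(query, store, k=2):
    """Return the names of the k stored vectors most similar to the query.

    This is a brute-force scan ranked by cosine similarity, highest first.
    """
    ranked = sorted(
        store.items(),
        key=lambda item: cosine_similarity(query, item[1]),
        reverse=True,
    )
    return [name for name, _ in ranked[:k]]

# A hypothetical query vector, compared as a whole against every stored vector.
query = [0.85, 0.15, 0.05, 0.35]
print(nearest(query, store, k=3))
```

Note that the ranking uses every component of each vector at once; no individual dimension is inspected or interpreted, which matches the holistic view described in the key points.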