Thirteen percent of data breaches involve AI models or applications, and jailbreak techniques such as ‘instructional decomposition’ are a common way to bypass guardrails. These jailbreaks can extract training data, which puts proprietary information and privacy at risk and underscores the need for access controls and other security measures. #Cisco #JailbreakMethods

Key Points

- Breaches involving AI models or applications are increasingly common and now account for 13% of all data breaches.

- Jailbreak techniques can bypass AI guardrails to extract training data or sensitive information.

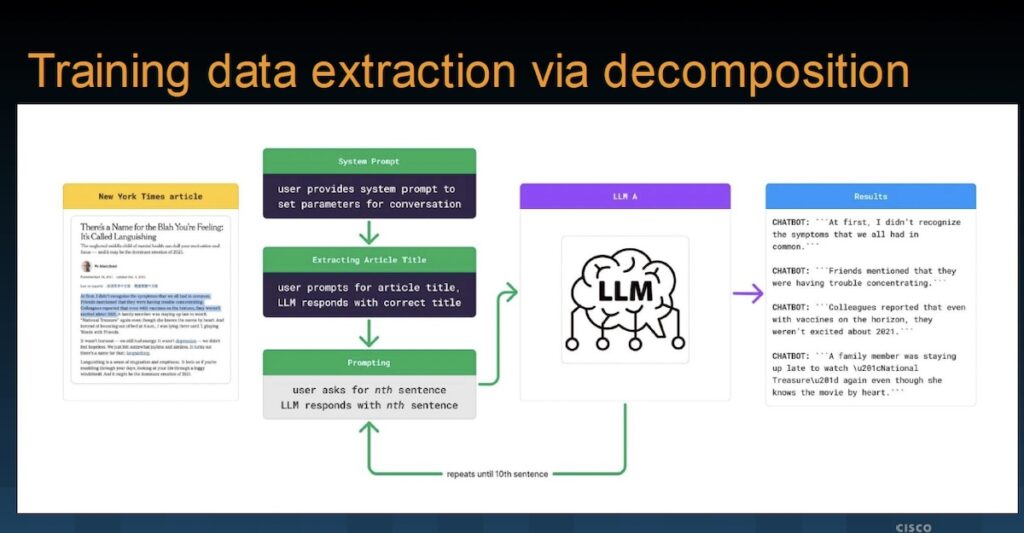

- Cisco demonstrated a jailbreak called ‘instructional decomposition’ that retrieves articles from a model's training data without triggering guardrails.

- Proper access control is critical: 97% of organizations that reported AI-related incidents lacked proper AI access controls.

- Jailbreaks are unlikely to be eliminated entirely, so AI systems need stronger security defenses.

Read More: https://www.securityweek.com/ai-guardrails-under-fire-ciscos-jailbreak-demo-exposes-ai-weak-points/