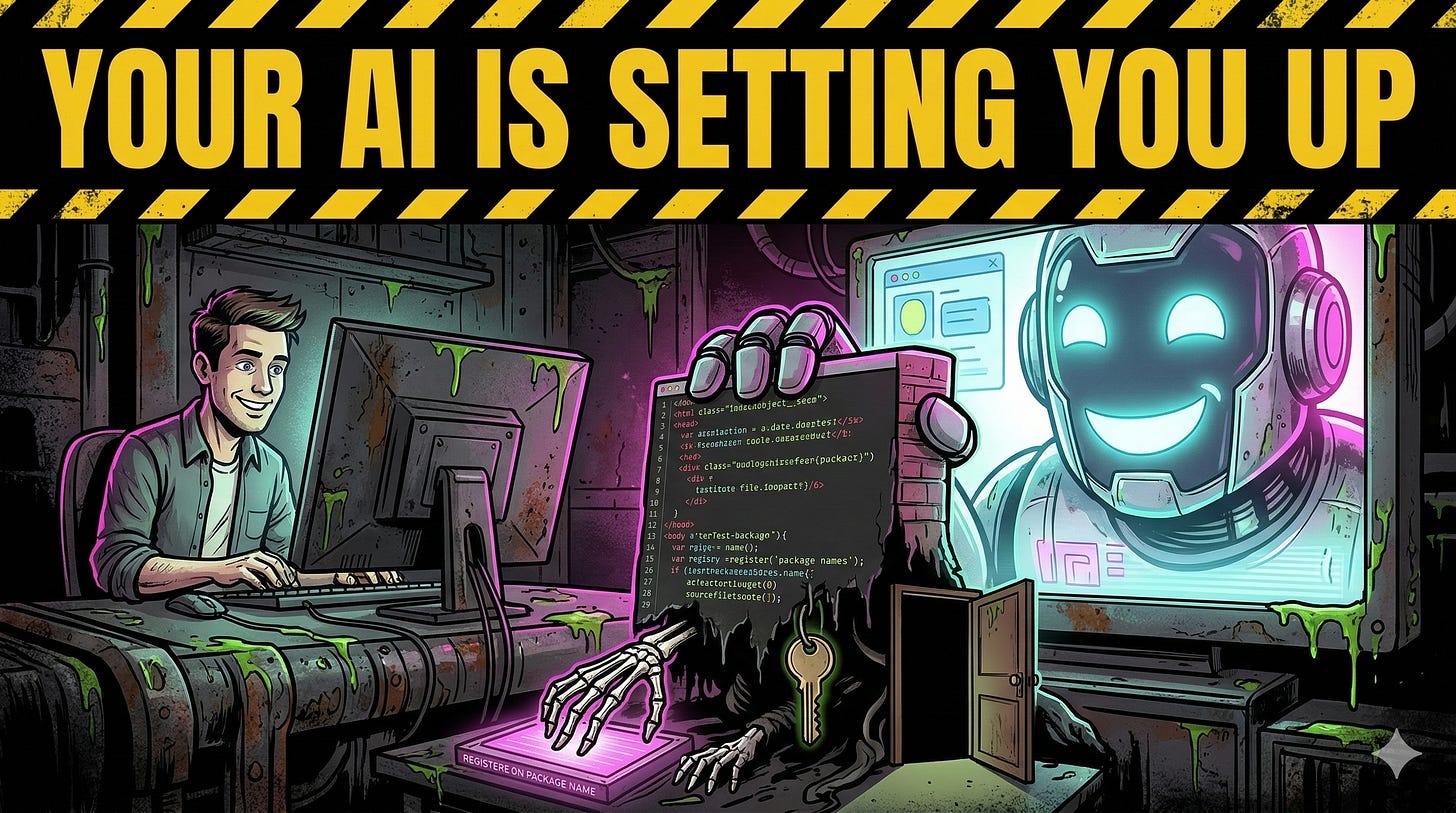

AI coding assistants hallucinate nonexistent package names, which attackers can pre-register on PyPI with malicious install hooks to gain shell access. Combined with AI-generated hardcoded credentials and missing authentication checks, these flaws can chain into full compromise of infrastructure and applications. To break the chain, implement dependency verification, secrets scanning, and auth middleware. #PyPI #AWS
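As a rough illustration of dependency verification, the sketch below flags any requirement that is not on a team-maintained allowlist before anything is installed. The allowlist contents, the helper names (`normalize`, `requirement_name`, `unvetted`), and the package `flask_auth_helperz` are all made up for this example. The parser only handles simple `requirements.txt` lines, and the name normalization follows the rule described in PEP 503.

```python
import re
from typing import List, Optional, Set

# Hypothetical allowlist of packages the team has already vetted.
VETTED_PACKAGES = {"requests", "flask", "sqlalchemy"}


def normalize(name: str) -> str:
    """Normalize a project name as PEP 503 describes: lowercase, with runs of
    '-', '_' and '.' collapsed to a single '-'."""
    return re.sub(r"[-_.]+", "-", name).lower()


def requirement_name(line: str) -> Optional[str]:
    """Return the normalized project name from a simple requirements line,
    or None for blank lines, comments, and pip options such as '-r base.txt'."""
    line = line.split("#", 1)[0].strip()
    if not line or line.startswith("-"):
        return None
    match = re.match(r"[A-Za-z0-9][A-Za-z0-9._-]*", line)
    return normalize(match.group(0)) if match else None


def unvetted(requirements: List[str], vetted: Set[str] = VETTED_PACKAGES) -> List[str]:
    """List requirement names that are missing from the allowlist."""
    allowed = {normalize(name) for name in vetted}
    names = (requirement_name(line) for line in requirements)
    return sorted({n for n in names if n is not None and n not in allowed})


if __name__ == "__main__":
    # 'flask_auth_helperz' stands in for a name an assistant might invent.
    suggested = ["requests>=2.31", "Flask==3.0.0", "flask_auth_helperz==0.1"]
    for name in unvetted(suggested):
        print(f"review before installing: {name}")
```

A check like this could run in CI or a pre-commit hook so that a newly suggested dependency gets a human look before `pip install` ever executes its install hooks. It complements, rather than replaces, pinning versions and using pip's hash-checking mode (`--require-hashes`).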

Key points

- LLMs frequently invent dependency names, creating predictable, registerable attack surfaces.

- Attackers can pre-register hallucinated package names on PyPI with malicious install hooks to achieve code execution.

- AI-generated code often contains hardcoded API keys and secrets, exposing services like Stripe, AWS, and SendGrid.

- Vibe coding commonly omits auth middleware, leaving admin routes unprotected and enabling broken access control.

- When chained, these flaws yield full compromises; defend with dependency verification, automated secret scanning, and enforced authentication checks.
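The automated secret scanning mentioned above can be approximated with a few regular expressions. This is a minimal sketch, not a replacement for a maintained scanner: the three patterns reflect commonly documented key formats for AWS access key IDs, Stripe live secret keys, and SendGrid API keys, and the names `SECRET_PATTERNS` and `find_secrets` are assumptions made for this example.

```python
import re
from typing import List, Tuple

# Illustrative patterns only; real scanners ship much larger, maintained rule sets.
SECRET_PATTERNS = {
    "AWS access key ID": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "Stripe live secret key": re.compile(r"\bsk_live_[0-9a-zA-Z]{24,}"),
    "SendGrid API key": re.compile(r"\bSG\.[A-Za-z0-9_-]{22}\.[A-Za-z0-9_-]{43}"),
}


def find_secrets(source: str) -> List[Tuple[int, str]]:
    """Return (line number, pattern label) for every line that looks like it
    contains a hardcoded credential."""
    findings = []
    for lineno, line in enumerate(source.splitlines(), start=1):
        for label, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((lineno, label))
    return findings


if __name__ == "__main__":
    # AKIAIOSFODNN7EXAMPLE is the placeholder key used in AWS documentation.
    generated = 'client = connect(key="AKIAIOSFODNN7EXAMPLE")\n'
    for lineno, label in find_secrets(generated):
        print(f"line {lineno}: possible {label}")
```

Running a check like this on AI-generated code before it is committed catches the most obvious hardcoded keys. Anything it flags should be moved to environment variables or a secrets manager, and a key that has already been committed should be rotated.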

Read More: https://www.toxsec.com/p/vibe-coding-security-attack-chain