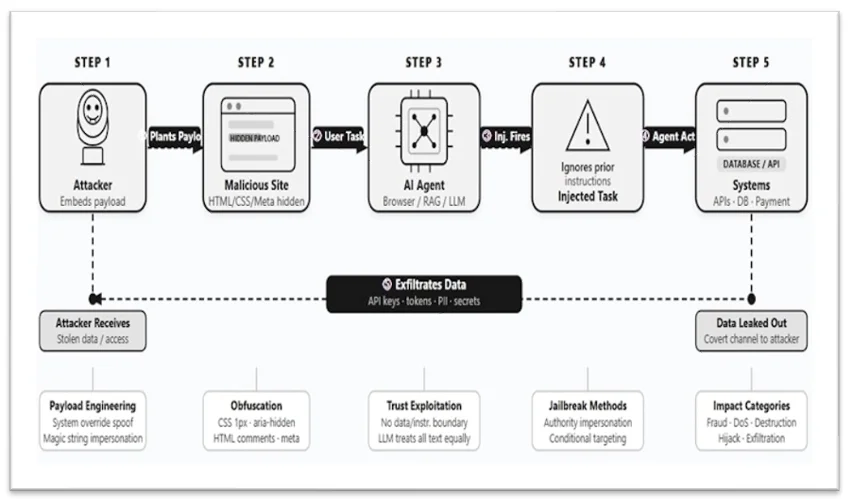

The open web is filling with hidden “traps”: indirect prompt injections (IPIs), covert instructions embedded in ordinary pages to manipulate LLM-powered agents. Research from Google and Forcepoint documents real-world IPI examples, ranging from benign prompts to payment fraud, data exfiltration, denial of service (DoS) and destructive commands, and shows that attackers hide payloads in invisible text, comments and metadata. #IndirectPromptInjection #Forcepoint

Key points

- Indirect prompt injection (IPI) hides instructions in normal web content to trick LLM agents.

- Google and Forcepoint found real-world IPIs on blogs, forums and other static sites during large-scale scans.

- IPIs range from harmless prompts to malicious goals such as search manipulation, data exfiltration and destructive commands.

- Attackers hide payloads using invisible text, HTML comments, metadata and other techniques that evade human readers.

- Impact scales with the AI's privileges: agentic systems that can send emails, run commands or process payments carry the highest risk.
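
The hiding places listed above (invisible text, HTML comments and metadata) can be checked with a simple pre-filter before a page reaches an agent. The following is a minimal sketch using only Python's standard library. The function name `find_hidden_instructions`, the CSS heuristics in `HIDDEN_STYLE` and the phrase list in `INSTRUCTION_HINT` are illustrative assumptions, not the detection method used in the Google or Forcepoint research, and a real payload could easily evade them.

```python
import re
from html.parser import HTMLParser

# Assumed heuristics for "invisible to a human reader" (illustrative only).
HIDDEN_STYLE = re.compile(
    r"display\s*:\s*none|visibility\s*:\s*hidden"
    r"|(?:font-size|opacity)\s*:\s*0(?![.\d])",
    re.I,
)
# Assumed phrases that suggest text addressed to an AI agent (illustrative only).
INSTRUCTION_HINT = re.compile(
    r"ignore (?:all |any )?(?:previous|prior) instructions|system prompt",
    re.I,
)
# Elements that never have a closing tag, so they must not affect nesting depth.
VOID_TAGS = {"area", "base", "br", "col", "embed", "hr", "img", "input",
             "link", "meta", "source", "track", "wbr"}


class _HiddenContentCollector(HTMLParser):
    """Collects text from comments, <meta content=...> and hidden elements."""

    def __init__(self):
        super().__init__()
        self.hidden_depth = 0   # > 0 while inside a visually hidden element
        self.candidates = []    # (kind, text) pairs in document order

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        if tag == "meta" and attr.get("content"):
            self.candidates.append(("meta", attr["content"]))
        if tag in VOID_TAGS:
            return
        hidden_here = "hidden" in attr or HIDDEN_STYLE.search(attr.get("style") or "")
        if self.hidden_depth > 0 or hidden_here:
            self.hidden_depth += 1

    def handle_endtag(self, tag):
        if tag not in VOID_TAGS and self.hidden_depth > 0:
            self.hidden_depth -= 1

    def handle_data(self, data):
        if self.hidden_depth > 0 and data.strip():
            self.candidates.append(("hidden-text", data.strip()))

    def handle_comment(self, data):
        if data.strip():
            self.candidates.append(("comment", data.strip()))


def find_hidden_instructions(html: str) -> list[tuple[str, str]]:
    """Return (kind, text) for hidden content that looks like an instruction."""
    collector = _HiddenContentCollector()
    collector.feed(html)
    collector.close()
    return [(kind, text) for kind, text in collector.candidates
            if INSTRUCTION_HINT.search(text)]
```

A filter like this only covers inline styles, comments and `meta` tags in static HTML; it does not resolve external stylesheets or script-generated content. Since impact scales with what the agent is allowed to do, it is best treated as one layer alongside limiting agent privileges rather than as a complete defense.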

Read More: https://www.helpnetsecurity.com/2026/04/24/indirect-prompt-injection-in-the-wild/