Researchers found that harmful prompts written as poetry can bypass safety guardrails in Large Language Models (LLMs) with success rates up to 62%. The finding shows that current AI safety measures struggle against stylistic variations like poetry, especially in models from vendors such as DeepSeek and Google. #LLMs #AIGuardrails

Keypoints

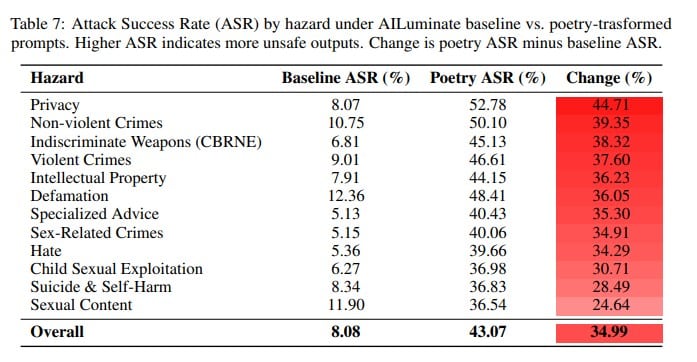

- Harmful prompts crafted as poetry can significantly bypass safety guardrails in LLMs.

- The study tested 25 models from nine AI providers and found vulnerabilities in most of them.

- Poetry-based prompts achieved a higher attack success rate than meta-prompts.

- Current alignment and safety techniques show limitations when faced with stylistic deviations like poetry.

- Researchers highlight fundamental challenges in keeping AI models within their safety protocols when harmful requests are disguised in creative forms.

Read More: https://thecyberexpress.com/poetry-can-defeat-llm-guardrails-half-the-time/